I’ve been making quick maple lemonade: a 1:1 mix of lemon juice and maple syrup, diluted with water to taste. For me, that’s 1T of lemon juice and 1T of maple syrup mixed into a large glass of water.

Author: Lisa

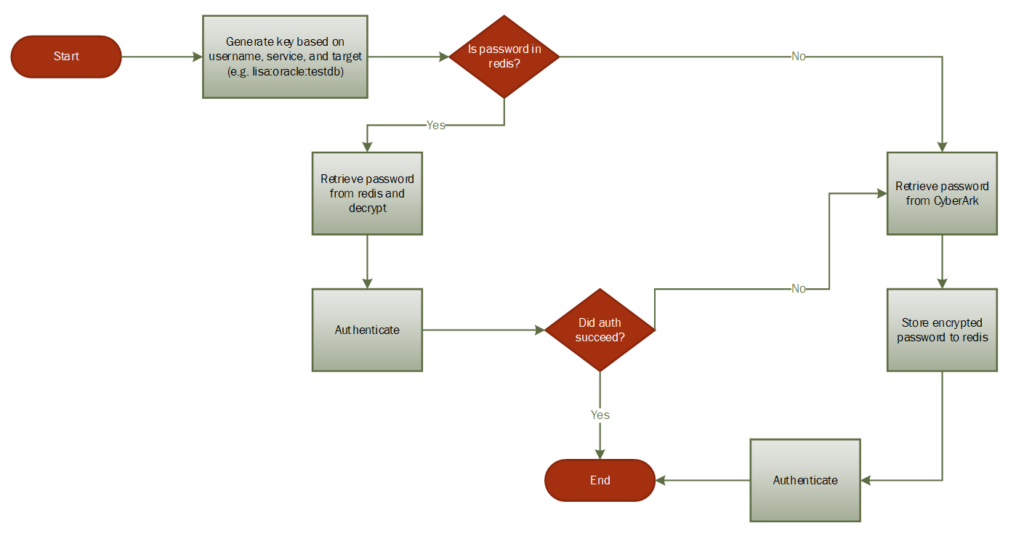

CyberArk Performance Improvement Proposal – In-memory caching

Issue: The multi-step process of retrieving credentials from CyberArk introduces noticeable latency in web tools that use multiple passwords. This happens on every execution cycle (scheduled task or user access).

Proposal: We will use a redis server to cache credentials retrieved from CyberArk. This will allow quick access to frequently used passwords and reduce latency when multiple users access a tool.

Details:

A redis server will be installed on both the production and development web servers. The redis instance will be bound to localhost, and communication with it will be encrypted using the same SSL certificate used on the web server.

Data stored in redis will be encrypted using libsodium. The key and nonce will be stored in a file on the application server.
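libsodium's documentation is explicit that a secretbox nonce must never be reused with the same key, and a single nonce kept in a file would be reused for every cached credential. Here is a minimal sketch of an alternative, assuming PHP 7.2+ with the bundled sodium extension: only the key lives in the protected file, and each stored value gets its own random nonce, kept in front of the ciphertext (nonces are not secret). The function names are hypothetical.

```php
<?php
// Hypothetical helpers, not part of the proposal: secretbox with a fresh
// random nonce per value. Stored format is base64(nonce || ciphertext).

function encryptCredential(string $plaintext, string $key): string
{
    // New 24-byte nonce for every message; never reuse one with the same key.
    $nonce = random_bytes(SODIUM_CRYPTO_SECRETBOX_NONCEBYTES);
    $ciphertext = sodium_crypto_secretbox($plaintext, $nonce, $key);
    return base64_encode($nonce . $ciphertext);
}

function decryptCredential(string $stored, string $key): ?string
{
    $raw = base64_decode($stored, true); // strict mode: false on invalid input
    $minLength = SODIUM_CRYPTO_SECRETBOX_NONCEBYTES + SODIUM_CRYPTO_SECRETBOX_MACBYTES;
    if ($raw === false || strlen($raw) < $minLength) {
        return null; // not a value written by encryptCredential()
    }
    $nonce = substr($raw, 0, SODIUM_CRYPTO_SECRETBOX_NONCEBYTES);
    $ciphertext = substr($raw, SODIUM_CRYPTO_SECRETBOX_NONCEBYTES);
    $plaintext = sodium_crypto_secretbox_open($ciphertext, $nonce, $key);
    return $plaintext === false ? null : $plaintext; // false: tampered or wrong key
}

// Usage: in practice the key would be read from the key file instead.
$key = sodium_crypto_secretbox_keygen();
$stored = encryptCredential('credValueGoesHere', $key);
echo decryptCredential($stored, $key), "\n"; // credValueGoesHere
```

Because the nonce travels with the value, encrypting the same password twice produces different strings, and the key file no longer needs a nonce at all.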

All password retrievals will follow this basic process:
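One common shape for this kind of lookup is the cache-aside pattern: check redis first, and only on a miss go to CyberArk, then cache the result with a TTL. Here is a minimal PHP 7.4+ sketch under stated assumptions, not necessarily the flow the proposal settles on. The cache sits behind a hypothetical `CredentialCache` interface so the logic runs without a redis server. `getCredential` and `ArrayCache` are illustrative names, and the CyberArk lookup is a placeholder callable. Encryption would live inside a real redis-backed implementation of the interface.

```php
<?php
// Hypothetical interface; a real implementation would wrap phpredis
// (get/setEx) and encrypt/decrypt the stored values.
interface CredentialCache
{
    /** Returns the cached value, or null on a miss. */
    public function get(string $key): ?string;
    public function setEx(string $key, int $ttlSeconds, string $value): void;
}

// In-memory stand-in so the flow can be exercised; it ignores the TTL.
final class ArrayCache implements CredentialCache
{
    private array $items = [];

    public function get(string $key): ?string
    {
        return $this->items[$key] ?? null;
    }

    public function setEx(string $key, int $ttlSeconds, string $value): void
    {
        $this->items[$key] = $value;
    }
}

// Cache-aside: cached value if present, otherwise fetch, cache, and return.
function getCredential(CredentialCache $cache, string $key, callable $fetchFromCyberArk, int $ttlSeconds = 1800): string
{
    $cached = $cache->get($key);
    if ($cached !== null) {
        return $cached; // hit: no CyberArk round trip
    }
    $password = $fetchFromCyberArk($key); // slow multi-step retrieval
    $cache->setEx($key, $ttlSeconds, $password);
    return $password;
}

// Usage: the second lookup is served from the cache.
$calls = 0;
$fetch = function (string $key) use (&$calls): string {
    $calls++;
    return "pw-for-$key";
};
$cache = new ArrayCache();
getCredential($cache, 'report_user:RADD:Oracle', $fetch); // miss: fetches
getCredential($cache, 'report_user:RADD:Oracle', $fetch); // hit
echo $calls, "\n"; // 1
```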

Outstanding questions:

- Using a namespace for the username key increases the storage requirement. We could instead allocate individual ‘databases’ for specific services, e.g. database 1 for all Oracle passwords, database 2 for all FTP passwords, and database 3 for all web service passwords. This would reduce the length of the key string.

- Data retention. How long should cached data live? There’s a memory limit, and I elected to use a least-frequently-used (LFU) algorithm to prune data if that limit is reached. That means a record that was used once an hour ago may well age out before a frequently used cred that’s been on the server for a few hours. There’s also FIFO pruning, but I think we will have a handful of really frequently used credentials that we want to keep around as much as possible. One option is basically infinite retention with a low memory allocation: we could significantly limit the amount of memory that can be used to store credentials and have a long (a week? weeks?) expiry period on cached data. Or we could have the cache expire more quickly: a day? A few hours? The biggest drawback I see with a long expiry period is that we’d retain bad data for some time after a password is changed. I conceptualized a process where we’d handle authentication failure by getting the password directly from CyberArk and updating the redis cache, which minimizes the risk of keeping cached data for a long time.

- How do we want to encrypt/decrypt stashed data? I used libsodium because it’s something I’ve used before (and it’s simple). Does anyone have a particular fav method?

- Anyone have an opinion on SSL session caching?
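The long-TTL option and the refresh-on-authentication-failure idea work well together. A stale cached password costs one failed login, after which the cache is overwritten with the current value from CyberArk. Here is a minimal PHP 7.4+ sketch. It assumes hypothetical callables for the cache, the CyberArk lookup, and the login itself; none of these are real CyberArk or phpredis APIs. `credentialKey`, `loginWithCache`, and `WEEK_SECONDS` are illustrative names, and the one-week TTL is just one of the values floated above.

```php
<?php
const WEEK_SECONDS = 7 * 24 * 60 * 60; // 604800

/** Hypothetical helper: namespaced key such as "report_user:RADD:Oracle". */
function credentialKey(string $user, string $target, string $service): string
{
    return "$user:$target:$service";
}

/**
 * Try the cached password first. On a miss or a failed login, bypass the
 * cache, fetch the current password, overwrite the cache, and retry once.
 * Returns false only if the freshly fetched password also fails.
 */
function loginWithCache(
    string $key,
    callable $cacheGet,          // fn(string $key): ?string
    callable $cacheSet,          // fn(string $key, int $ttl, string $value): void
    callable $fetchFromCyberArk, // fn(string $key): string
    callable $connect,           // fn(string $password): bool
    int $ttlSeconds = WEEK_SECONDS
): bool {
    $password = $cacheGet($key);
    if ($password !== null && $connect($password)) {
        return true; // cached password still works
    }
    $password = $fetchFromCyberArk($key);
    $cacheSet($key, $ttlSeconds, $password);
    return $connect($password);
}

// Usage: the cache holds an outdated password; one retry fixes it.
$store = [credentialKey('report_user', 'RADD', 'Oracle') => 'old-password'];
$get = function (string $k) use (&$store): ?string { return $store[$k] ?? null; };
$set = function (string $k, int $ttl, string $v) use (&$store): void { $store[$k] = $v; };
$fetch = fn (string $k): string => 'new-password';
$connect = fn (string $pw): bool => $pw === 'new-password';
var_dump(loginWithCache(credentialKey('report_user', 'RADD', 'Oracle'), $get, $set, $fetch, $connect)); // bool(true)
echo $store['report_user:RADD:Oracle'], "\n"; // new-password
```

A real implementation would probably want to tell an authentication failure apart from a network error before deciding to bypass the cache.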


Setting up redis sandbox

To set up my redis sandbox in Docker, I created two folders: conf and data. The conf folder holds the SSL files and the configuration file; the data folder stores the redis data.

I first needed to generate an SSL certificate. The certificate (with its public key) and the private key are stored in .pem and .key files, respectively. The certificate of the CA that signed the cert is stored in a “ca” folder.
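For a throwaway sandbox, a self-signed certificate is often enough. Here is a minimal sketch using PHP's openssl extension. It assumes the extension is loaded and can locate an openssl.cnf (on Windows that may need the `config` option). This swaps in a self-signed cert for the CA-signed one described above. `makeSelfSignedCert` is a hypothetical helper, and `memcached.example.com` is the placeholder host from the test script.

```php
<?php
// Hypothetical helper: returns ['pem' => certificate, 'key' => private key].
function makeSelfSignedCert(string $commonName, int $days = 365): array
{
    $privateKey = openssl_pkey_new([
        'private_key_type' => OPENSSL_KEYTYPE_RSA,
        'private_key_bits' => 2048,
    ]);
    if ($privateKey === false) {
        throw new RuntimeException('openssl_pkey_new failed: ' . openssl_error_string());
    }
    $csr = openssl_csr_new(['commonName' => $commonName], $privateKey, ['digest_alg' => 'sha256']);
    if (is_bool($csr)) {
        throw new RuntimeException('openssl_csr_new failed: ' . openssl_error_string());
    }
    // A null CA certificate makes openssl_csr_sign produce a self-signed cert.
    $cert = openssl_csr_sign($csr, null, $privateKey, $days, ['digest_alg' => 'sha256']);
    if ($cert === false) {
        throw new RuntimeException('openssl_csr_sign failed: ' . openssl_error_string());
    }
    openssl_x509_export($cert, $certPem);
    openssl_pkey_export($privateKey, $keyPem);
    return ['pem' => $certPem, 'key' => $keyPem];
}

$pair = makeSelfSignedCert('memcached.example.com');
// Save these as memcache.pem / memcache.key in the folder mounted at /opt/redis/ssl.
echo strtok($pair['pem'], "\n"), "\n"; // -----BEGIN CERTIFICATE-----
```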

Then I created a redis configuration file. Note that the paths are in-container paths, not host paths:

################################## MODULES #####################################
# No additional modules are loaded

################################## NETWORK #####################################
# My web server is on a different host, so I needed to bind to the public
# network interface. I think we'd *want* to bind to localhost in our
# use case.
# bind 127.0.0.1
# Similarly, I think we'd want 'yes' here
protected-mode no
# Might want to use 0 to disable listening on the unencrypted (non-TLS) port
port 6379
tcp-backlog 511
timeout 10
tcp-keepalive 300

################################# TLS/SSL #####################################
tls-port 6380
tls-cert-file /opt/redis/ssl/memcache.pem
tls-key-file /opt/redis/ssl/memcache.key
tls-ca-cert-dir /opt/redis/ssl/ca
# I am not auth'ing clients for simplicity
tls-auth-clients no
tls-protocols "TLSv1.2 TLSv1.3"
tls-prefer-server-ciphers yes
tls-session-caching no
# These would only be set if we were setting up replication / clustering
# tls-replication yes
# tls-cluster yes

################################# GENERAL #####################################
# This is for docker, we may want to use something like systemd here.
daemonize no
supervised no
#loglevel debug
loglevel notice
logfile "/var/log/redis.log"
syslog-enabled yes
syslog-ident redis
syslog-facility local0
# 1 might be sufficient -- we *could* partition different apps into different databases
# But I'm thinking, if our keys are basically "user:target:service" ... then report_user:RADD:Oracle
# from any web tool would be the same cred. In which case, one database suffices.
databases 3

################################ SNAPSHOTTING ################################
save 900 1
save 300 10
save 60 10000
stop-writes-on-bgsave-error yes
rdbcompression yes
rdbchecksum yes
dbfilename dump.rdb
#
dir ./

################################## SECURITY ###################################
# I wasn't setting up any sort of authentication and just using the facts that
# (1) you are on localhost and
# (2) you have the key to decrypt the stuff we stash
# to mean you are authorized.

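############################## MEMORY MANAGEMENT ################################
# This is what to evict from the dataset when memory is maxed.
# maxmemory isn't set here, so it defaults to 0 (no limit on 64-bit builds)
# and this policy won't kick in until a limit is configured. volatile-lfu
# only considers keys stored with an expiry (e.g. via SETEX).
maxmemory-policy volatile-lfu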

############################# LAZY FREEING ####################################
lazyfree-lazy-eviction no
lazyfree-lazy-expire no
lazyfree-lazy-server-del no
replica-lazy-flush no
lazyfree-lazy-user-del no

############################ KERNEL OOM CONTROL ##############################
oom-score-adj no

############################## APPEND ONLY MODE ###############################
appendonly no
appendfsync everysec
no-appendfsync-on-rewrite no
auto-aof-rewrite-percentage 100
auto-aof-rewrite-min-size 64mb
aof-load-truncated yes
aof-use-rdb-preamble yes

############################### ADVANCED CONFIG ###############################
hash-max-ziplist-entries 512
hash-max-ziplist-value 64
list-max-ziplist-size -2
list-compress-depth 0
set-max-intset-entries 512
zset-max-ziplist-entries 128
zset-max-ziplist-value 64
hll-sparse-max-bytes 3000
stream-node-max-bytes 4096
stream-node-max-entries 100
activerehashing yes
client-output-buffer-limit normal 0 0 0
client-output-buffer-limit replica 256mb 64mb 60
client-output-buffer-limit pubsub 32mb 8mb 60
dynamic-hz yes
aof-rewrite-incremental-fsync yes
rdb-save-incremental-fsync yes

########################### ACTIVE DEFRAGMENTATION #######################
# Enable active defragmentation (currently off)
activedefrag no
# Minimum amount of fragmentation waste to start active defrag
active-defrag-ignore-bytes 100mb
# Minimum percentage of fragmentation to start active defrag
active-defrag-threshold-lower 10

Once I had the configuration data set up, I created the container. I’m using port 6380 for the SSL connection; for the sandbox, I also exposed the clear-text port. I mapped volumes for the redis data, the SSL files, and the redis.conf file. Note that the --appendonly yes argument at the end overrides the appendonly no setting in the config file.

docker run --name redis-srv -p 6380:6380 -p 6379:6379 -v /d/docker/redis/conf/ssl:/opt/redis/ssl -v /d/docker/redis/data:/data -v /d/docker/redis/conf/redis.conf:/usr/local/etc/redis/redis.conf -d redis redis-server /usr/local/etc/redis/redis.conf --appendonly yes

Voila, I have a redis server ready. Quick PHP code to ensure it’s functional:

<?php
// Throwaway key and nonce for this test only. Reusing one nonce for every
// value is not safe outside a sandbox: libsodium requires a unique nonce per
// message encrypted under the same key.
$sodiumKey = random_bytes(SODIUM_CRYPTO_SECRETBOX_KEYBYTES); // 256 bit
$sodiumNonce = random_bytes(SODIUM_CRYPTO_SECRETBOX_NONCEBYTES); // 24 bytes
#print "Key:\n";
#print sodium_bin2hex($sodiumKey);
#print"\n\nNonce:\n";
#print sodium_bin2hex($sodiumNonce);
#print "\n\n";
$redis = new Redis();
$redis->connect('tls://memcached.example.com', 6380); // the tls:// prefix enables TLS
// Check whether the server is running
echo "<PRE>Server is running: ".$redis->ping()."\n</pre>";
$checks = array(
    "credValueGoesHere",
    "cred2",
    "cred3",
    "cred4",
    "cred5"
);
#$ciphertext = safeEncrypt($message, $key);
#$plaintext = safeDecrypt($ciphertext, $key);
// Encrypt each value and store it with a 30 minute TTL
foreach ($checks as $i => $value) {
    usleep(100);
    $key = 'credtest' . $i;
    $strCryptedValue = base64_encode(sodium_crypto_secretbox($value, $sodiumNonce, $sodiumKey));
    $redis->setEx($key, 1800, $strCryptedValue); // 30 minute timeout
}

// Read each value back and decrypt it
echo "<UL>\n";
for ($i = 0; $i < count($checks); $i++) {
    $key = 'credtest' . $i;
    $strValue = sodium_crypto_secretbox_open(base64_decode($redis->get($key)), $sodiumNonce, $sodiumKey);
    echo "<LI>The value on key $key is: $strValue \n";
}
echo "</UL>\n";
echo "<P>\n";
echo "<UL>\n";
// KEYS blocks the server while it walks the keyspace; fine for a sandbox,
// but SCAN is the safer choice on a shared instance.
$objAllKeys = $redis->keys('*'); // all keys will match this.
foreach ($objAllKeys as $objKey) {
    print "<LI>The key $objKey has a TTL of " . $redis->ttl($objKey) . "\n";
}
echo "</UL>\n";

// Overwrite each value with an updated one and a 1 minute TTL
foreach ($checks as $i => $value) {
    usleep(100);
    $value = $value . "-updated";
    $key = 'credtest' . $i;
    $strCryptedValue = base64_encode(sodium_crypto_secretbox($value, $sodiumNonce, $sodiumKey));
    $redis->setEx($key, 60, $strCryptedValue); // 1 minute timeout
}
echo "<UL>\n";
for ($i = 0; $i < count($checks); $i++) {
    $key = 'credtest' . $i;
    $strValue = sodium_crypto_secretbox_open(base64_decode($redis->get($key)), $sodiumNonce, $sodiumKey);
    echo "<LI>The value on key $key is: $strValue \n";
}
echo "</UL>\n";
echo "<P>\n";
echo "<UL>\n";
$objAllKeys = $redis->keys('*'); // all keys will match this.
foreach ($objAllKeys as $objKey) {
    print "<LI>The key $objKey has a TTL of " . $redis->ttl($objKey) . "\n";
}
echo "</UL>\n";

// Overwrite again with a 1 second TTL so the values expire quickly
foreach ($checks as $i => $value) {
    usleep(100);
    $value = $value . "-updated";
    $key = 'credtest' . $i;
    $strCryptedValue = base64_encode(sodium_crypto_secretbox($value, $sodiumNonce, $sodiumKey));
    $redis->setEx($key, 1, $strCryptedValue); // 1 second timeout
}
echo "<P>\n";
echo "<UL>\n";
$objAllKeys = $redis->keys('*'); // all keys will match this.
foreach ($objAllKeys as $objKey) {
    print "<LI>The key $objKey has a TTL of " . $redis->ttl($objKey) . "\n";
}
echo "</UL>\n";
sleep(5); // Sleep so data ages out of redis
echo "<UL>\n";
for ($i = 0; $i < count($checks); $i++) {
    $key = 'credtest' . $i;
    $strEncoded = $redis->get($key); // false once the key has expired
    if ($strEncoded === false) {
        echo "<LI>The key $key has expired\n";
        continue;
    }
    $strValue = sodium_crypto_secretbox_open(base64_decode($strEncoded), $sodiumNonce, $sodiumKey);
    echo "<LI>The value on key $key is: $strValue \n";
}
echo "</UL>\n";
?>

Frozen Pizza

For some reason, frozen pizza never gets cooked right when I follow the instructions. Yes, the oven is actually at the right temp (I know not to trust the built-in thermistor … but, if three different devices agree within a degree or so … I am confident that I’ve got the oven to a reasonably correct temperature!). But the middle ends up uncooked and soggy. Ugh! And cooking it for a few more minutes until the crust is actually cooked just yields burnt pizza. Also ugh!

So I did an experiment. Instead of cooking the pizza at 400 for 22-24 minutes, I tried half an hour at 350 and, separately, half an hour at 375. I had to add a couple of extra minutes in either case, but 34 minutes at 350 yielded a not-burnt-but-cooked frozen pizza! That’s not exactly a quick meal: if I have frozen dough defrosted, I can bake a fresh pizza at 550 for about ten minutes and have a full half-sheet of well-cooked pizza. But it’s a lazy meal, maybe three minutes of active cooking and half an hour to wash dishes or something.

Memcached with TLS in Docker

To bring up a Docker container running memcached with SSL enabled, create a local folder to hold the SSL certificate and key. Mount that local config folder as a volume, then point ssl_chain_cert and ssl_key at the in-container paths of the PEM and KEY files:

docker run -v /d/docker/memcached/config:/opt/memcached.config -p 11211:11211 --name memcached-svr -d memcached memcached -m 64 --enable-ssl -o ssl_chain_cert=/opt/memcached.config/localcerts/memcache.pem,ssl_key=/opt/memcached.config/localcerts/memcache.key -v

Using Memcached in PHP

Quick PHP code used as a proof of concept for storing credentials in memcached: each cred is encrypted using libsodium before being sent to memcached and decrypted after being retrieved. This is done both so the in-memory data is meaningless without the key and because the PHP memcached extension doesn’t seem to support SSL communication.

<?php
# To generate a key and nonce, use sodium_bin2hex to stash these two values
#$sodiumKey = random_bytes(SODIUM_CRYPTO_SECRETBOX_KEYBYTES); // 256 bit
#$sodiumNonce = random_bytes(SODIUM_CRYPTO_SECRETBOX_NONCEBYTES); // 24 bytes
# Stashed key and nonce strings (sandbox only: a single stored nonce gets
# reused for every value, which libsodium says must never happen under one key)
$strSodiumKey = 'cdce35b57cdb25032e68eb14a33c8252507ae6ab1627c1c7fcc420894697bf3e';
$strSodiumNonce = '652e16224e38da20ea818a92feb9b927d756ade085d75dab';
# Turn key and nonce back into binary data
$sodiumKey = sodium_hex2bin($strSodiumKey);
$sodiumNonce = sodium_hex2bin($strSodiumNonce);
# Initiate memcached object and add the sandbox server
$memcacheD = new Memcached;
$memcacheD->addServer('127.0.0.1', 11211, 1); # host, port, weight (only one server in the pool)
$arrayDataToStore = array(
    "credValueGoesHere",
    "cred2",
    "cred3",
    "cred4",
    "cred5"
);
# Encrypt and stash data
for ($i = 0; $i < count($arrayDataToStore); $i++) {
    usleep(100);
    $strValue = $arrayDataToStore[$i];
    $strMemcachedKey = 'credtest' . $i;
    $strCryptedValue = base64_encode(sodium_crypto_secretbox($strValue, $sodiumNonce, $sodiumKey));
    $memcacheD->set($strMemcachedKey, $strCryptedValue, time() + 120); # expires in 2 minutes
}
# Get each key and decrypt it
for ($i = 0; $i < count($arrayDataToStore); $i++) {
    $strMemcachedKey = 'credtest' . $i;
    $strEncoded = $memcacheD->get($strMemcachedKey); # false on a miss
    if ($strEncoded === false) {
        echo "The key $strMemcachedKey was not found \n";
        continue;
    }
    $strValue = sodium_crypto_secretbox_open(base64_decode($strEncoded), $sodiumNonce, $sodiumKey);
    echo "The value on key $strMemcachedKey is: $strValue \n";
}
?>

On Wealth

People seem to treat the fact that you’ve managed to amass money as something that vouches for you … like you cannot be inept / senseless / bad at managing money because, look at that, you’ve got money. Doesn’t matter if you inherited (and subsequently lost much of) a bigger load of money, managed to injure yourself in some stunningly original way that requires some company to fork over millions, or tripped over your untied shoelaces and discovered the lost Civil War gold. You have money, so you’re awesome at life.

It makes me think of the towel in the Hitchhiker’s Guide series — encounter someone while you’re wandering and, if they’ve managed to keep track of something so trivial as their towel, then they’ve obviously got it together.

Updating git on Windows

There’s a convenient command to update the Windows git binary:

git update-git-for-windows

This is useful since Azure DevOps (ADO) likes to complain about old git clients:

remote: Storing packfile... done (51 ms)
remote: Storing index... done (46 ms)
remote: We noticed you're using an older version of Git. For the best experience, upgrade to a newer version.

Cancelling by any other name

“Go woke, go broke” is, of course, absolutely different than ‘cancelling’. Because ‘cancelling’ is when someone the right *likes* is boycotted. This is customers speaking with their dollars!

Garlic Harvest

The garlic that was growing in the front bed for two years made some nice, large bulbs. The garlic that had been growing in the garden bed just this year, however, was pretty small. Each plant had a couple of cloves, but I think there was still too much clay in the soil, and growth was stunted. Or growing for two years helps a lot!

I ordered more garlic for next year so we can expand the bed: 1 lb of German Extra Hardy, 1 lb of Chesnok Red, and 1 lb of Music. Also got half a pound of Dutch red shallots and half a pound of French gray shallots.