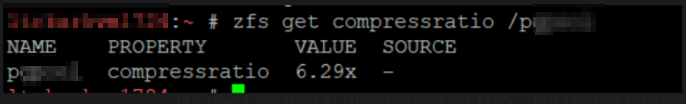

We’ve got several PostgreSQL servers using the ZFS file system for the database, and I needed to know how compressed the data is. Fortunately, there appears to be a zfs command that does exactly that: report the compression ratio for a ZFS file system. Use zfs get compressratio /path/to/mount.
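If you want to collect that ratio from a monitoring script, something like the following minimal sketch might work. It assumes the zfs CLI is on the PATH and uses the scripted-output flags -H and -o value; the function names are made up for illustration.

```python
import subprocess


def parse_compressratio(value: str) -> float:
    """Convert a compressratio value such as '1.85x' (or a bare '1.85') to a float."""
    return float(value.strip().rstrip("x"))


def get_compressratio(dataset: str) -> float:
    """Ask zfs for a dataset's compression ratio (requires the zfs CLI)."""
    result = subprocess.run(
        ["zfs", "get", "-H", "-o", "value", "compressratio", dataset],
        capture_output=True,
        text=True,
        check=True,
    )
    return parse_compressratio(result.stdout)
```

Calling get_compressratio("/path/to/mount") on a host with ZFS should return the same number the command prints, minus the trailing x.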


Web Proxy Auto Discovery (WPAD) DNS Failure

I wanted to set up automatic proxy discovery on our home network, but it just didn’t work. The website is there, it looks fine … but it doesn’t work. Turns out Microsoft introduced a security feature in Windows Server 2008 that prevents Windows DNS servers from serving specific names. They “banned” Web Proxy Auto Discovery (WPAD) and Intra-Site Automatic Tunnel Addressing Protocol (ISATAP). Even if you’ve got a valid wpad.example.com host recorded in your domain, Windows DNS server says “Nope, no such thing!”. I guess I can appreciate the logic: some malicious actor could hijack all of your connections by tunnelling or proxying your traffic. But … doesn’t the fact I bothered to manually create a hostname kind of clue you in to the fact I am trying to do this?!?

I gave up and added the proxy config to my group policy — a few computers, then, needed to be manually configured. It worked. Looking in the event log for a completely different problem, I saw the following entry:

Event ID 6268

The global query block list is a feature that prevents attacks on your network by blocking DNS queries for specific host names. This feature has caused the DNS server to fail a query with error code NAME ERROR for wpad.example.com. even though data for this DNS name exists in the DNS database. Other queries in all locally authoritative zones for other names that begin with labels in the block list will also fail, but no event will be logged when further queries are blocked until the DNS server service on this computer is restarted. See product documentation for information about this feature and instructions on how to configure it.

The oddest bit is that this appears to be a substring ‘starts with’ query — like wpadlet or wpadding would also fail? A quick search produced documentation on this Global Query Blocklist … and two quick ways to resolve the issue.

(1) Change the block list to contain only the services you don’t want to use. I don’t use ISATAP, so blocking isatap* hostnames isn’t problematic:

dnscmd /config /globalqueryblocklist isatap

View the current blocklist with:

dnscmd /info /globalqueryblocklist

– Or –

(2) Disable the block list — more risk, but it avoids having to figure this all out again in a few years when a hostname starting with isatap doesn’t work for no reason!

dnscmd /config /enableglobalqueryblocklist 0
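After changing the block list, it is worth confirming from a client that the name actually resolves. Below is a small sketch of such a check; the helper name is hypothetical, and the resolver parameter exists only so the function can be exercised without a live DNS server. Client-side DNS caching may delay the result.

```python
import socket


def name_resolves(hostname, resolver=socket.gethostbyname):
    """Return True if the resolver finds an address for hostname, else False."""
    try:
        resolver(hostname)
        return True
    except OSError:
        # socket.gaierror (a subclass of OSError) is raised when the lookup fails
        return False
```

Running name_resolves("wpad.example.com") before and after the dnscmd change should flip from False to True if the block list was the culprit.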

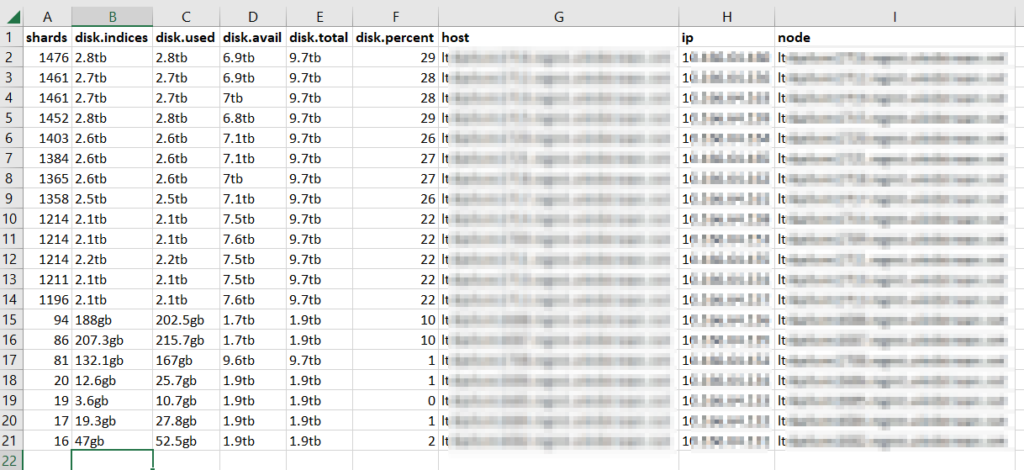

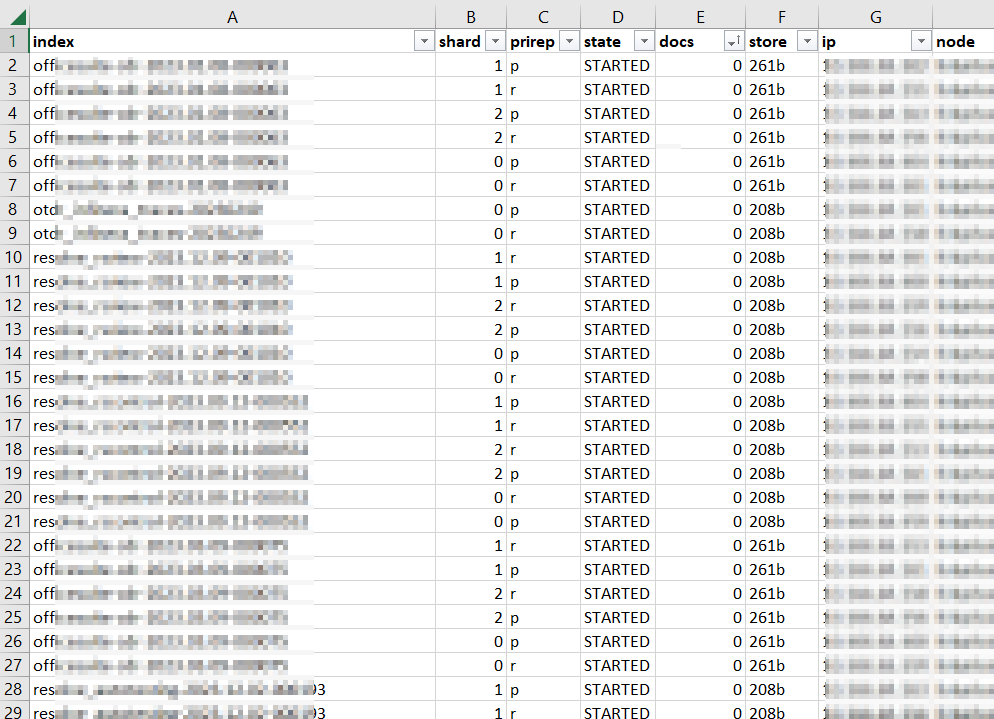

ElasticSearch — Too Many Shards

Our ElasticSearch environment melted down in a fairly spectacular fashion. Evidently (at least in older iterations), it’s an unhandled Java exception when a server tries to send data to another server that refuses it because accepting would put the receiver over the shard limit. So we didn’t just have a server or three go into read-only mode; we had a cascading failure where Java would throw an exception and the process died. Restarting the ElasticSearch service temporarily restored functionality, so I quickly increased the max shards per node limit to keep the system up whilst I cleaned up whatever I could clean up:

curl -X PUT http://uid:pass@`hostname`:9200/_cluster/settings -H "Content-Type: application/json" -d '{ "persistent": { "cluster.max_shards_per_node": "5000" } }'

There were two requests against the ES API that were helpful in cleaning ‘stuff’ up — GET /_cat/allocation?v returns a list of each node in the ES cluster with a count of shards (plus disk space) being used. This was useful in confirming that load across ‘hot’, ‘warm’, and ‘cold’ nodes was reasonable. If it was not, we would want to investigate why some nodes were under-allocated. We were, however, fine.

The second request, GET /_cat/shards?v=true, dumps out all of the shards that comprise the stored data. In my case, a lot of clients create a new index daily (MyApp-20231215, for example) and then proceed to add absolutely nothing to that index. Literally 10% of our shards were devoted to storing zero documents! Well, that’s silly. I created a quick script to remove any zero-document index that is older than a week. A new document coming in will create the index again, and we don’t need to waste shards not storing data.
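The selection logic for that kind of cleanup could look roughly like the sketch below. This is not the script I run, just an illustration; it assumes the input is the parsed JSON from GET /_cat/indices?format=json&h=index,docs.count,creation.date, where every value arrives as a string and creation.date is epoch milliseconds. The function name and the choice to skip dot-prefixed indices are assumptions.

```python
from datetime import datetime, timedelta, timezone


def empty_old_indices(rows, now=None, min_age=timedelta(days=7)):
    """Return names of indices holding zero documents that are older than min_age."""
    if now is None:
        now = datetime.now(timezone.utc)
    cutoff_ms = (now - min_age).timestamp() * 1000
    selected = []
    for row in rows:
        if row["index"].startswith("."):
            continue  # leave system indices alone
        count = row.get("docs.count")
        if count is None:
            continue  # closed indices report no document count
        if int(count) == 0 and int(row["creation.date"]) < cutoff_ms:
            selected.append(row["index"])
    return selected
```

Each returned name can then be removed with a DELETE request against that index. Printing the list and reviewing it before deleting anything is a sensible first run.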

Once you’ve cleaned up the shards, it’s a good idea to drop your shard-per-node configuration down again. I’m also putting together a script to run through the allocated shards per node data to alert us when allocation is unbalanced or when total shards approach our limit. Hopefully this will allow us to proactively reduce shards instead of having the entire cluster fall over one night.
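A rough sketch of what that alerting check might look like follows. It assumes the input is the parsed JSON from GET /_cat/allocation?format=json, that every listed node counts toward the cluster-wide limit (max shards per node multiplied by the number of nodes), and that comparing each node against the cluster mean is a good enough balance test. The thresholds and the function name are made up, and a mean across hot, warm, and cold tiers may need to be split per tier in practice.

```python
def shard_warnings(rows, max_shards_per_node, warn_fraction=0.8, imbalance_factor=2.0):
    """Return warning strings for a near-limit shard total or lopsided nodes."""
    # The allocation listing includes an UNASSIGNED row that is not a real node
    counts = {r["node"]: int(r["shards"]) for r in rows if r["node"] != "UNASSIGNED"}
    warnings = []
    if not counts:
        return warnings
    total = sum(counts.values())
    limit = max_shards_per_node * len(counts)
    if total >= warn_fraction * limit:
        warnings.append(f"cluster holds {total} of {limit} allowed shards")
    mean = total / len(counts)
    for node, shards in sorted(counts.items()):
        if mean > 0 and (shards > mean * imbalance_factor or shards < mean / imbalance_factor):
            warnings.append(f"node {node} holds {shards} shards (mean {mean:.0f})")
    return warnings
```

An empty list means nothing to report; anything else can be mailed or pushed to whatever alerting channel is already in place.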

DIFF’ing JSON

While a locally processed web tool like https://github.com/zgrossbart/jdd can be used to identify differences between two JSON files, regular diff can be used from the command line for simple comparisons. With jq sorting the JSON keys, diff -y marks each line that differs between the two files (the pipe characters between the two columns in this example). Since the keys are sorted, key order doesn’t matter. Array order still does: a list of 1,2,3 in one file and 2,1,3 in the other isn’t sorted, so it will show up as a difference.

[lisa@fedorahost ~]# diff -y <(jq --sort-keys . 1.json) <(jq --sort-keys . 2.json )
{                                                               {
  "glossary": {                                                   "glossary": {
    "GlossDiv": {                                                   "GlossDiv": {
      "GlossList": {                                                  "GlossList": {
        "GlossEntry": {                                                 "GlossEntry": {
          "Abbrev": "ISO 8879:1986",                                      "Abbrev": "ISO 8879:1986",
          "Acronym": "SGML",                                  |           "Acronym": "XGML",
          "GlossDef": {                                                   "GlossDef": {
            "GlossSeeAlso": [                                               "GlossSeeAlso": [
              "GML",                                                          "GML",
              "XML"                                                           "XML"
            ],                                                              ],
            "para": "A meta-markup language, used to create m               "para": "A meta-markup language, used to create m
          },                                                              },
          "GlossSee": "markup",                                           "GlossSee": "markup",
          "GlossTerm": "Standard Generalized Markup Language"             "GlossTerm": "Standard Generalized Markup Language"
          "ID": "SGML",                                                   "ID": "SGML",
          "SortAs": "SGML"                                    |           "SortAs": "XGML"
        }                                                               }
      },                                                              },
      "title": "S"                                                    "title": "S"
    },                                                              },
    "title": "example glossary"                                     "title": "example glossary"
  }                                                               }
}                                                               }
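Where jq isn’t installed, the same sort-the-keys-then-diff idea can be sketched with Python’s standard library. The function name is made up for illustration, and the output is a unified diff rather than the side-by-side view above.

```python
import difflib
import json


def json_diff(text_a, text_b):
    """Return unified-diff lines between two JSON documents after sorting keys."""

    def normalise(text):
        # Re-serialise with sorted keys so key order cannot cause a difference
        return json.dumps(json.loads(text), sort_keys=True, indent=2).splitlines()

    return list(
        difflib.unified_diff(
            normalise(text_a),
            normalise(text_b),
            fromfile="1.json",
            tofile="2.json",
            lineterm="",
        )
    )
```

Passing the contents of 1.json and 2.json returns an empty list when the documents match apart from key order. As with the jq approach, arrays are compared in their original order.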

Bulk Download of YouTube Videos from Channel

Several years ago, I started recording our Township meetings and posting them to YouTube. This was very helpful: even our government officials used the recordings to refresh their memory about what happened in a meeting. But it also led people to ask “why, exactly, are we relying on some random citizen to provide this service? What if they are busy? Or move?!” … and the Township created their own channel and posted their meeting recordings. This was a great way to promote transparency; however, they’ve got retention policies. Since we have absolutely been at meetings where it would be very helpful to know what happened five, ten, forty!! years ago … my expectation is that these videos will be useful far beyond the allotted document retention period.

We decided to keep our channel around with the historic archive of government meeting recordings. There’s no longer time criticality — anyone who wants to see a current meeting can just use the township’s channel. We have a script that lists all of the videos from the township’s channel and downloads them — once I complete back-filling our archive, I will modify the script to stop once it reaches a video series we already have. But this quick script will list all videos published to a channel and download the highest quality MP4 file associated with that video.

import datetime
import json
import os
from time import sleep
from urllib.request import urlopen

from pytube import YouTube

# config.py holds the date of the last run; it is rewritten at the end of this script
from config import dateLastDownloaded

# API key for my Google Developer project
strAPIKey = '<CHANGEIT>'
# YouTube account channel ID
strChannelID = '<CHANGEIT>'

# Work from the script's own directory so downloads and config.py land beside it
os.chdir(os.path.dirname(os.path.abspath(__file__)))
print(os.getcwd())

strBaseVideoURL = 'https://www.youtube.com/watch?v='
strSearchAPIv3URL = 'https://www.googleapis.com/youtube/v3/search?'

iStart = 0      # Normally 0 -- raise it to skip the first N files when a batch fails midway
iProcessed = 0  # Just a counter

strStartURL = f"{strSearchAPIv3URL}key={strAPIKey}&channelId={strChannelID}&part=snippet,id&order=date&maxResults=50"
strYoutubeURL = strStartURL

while True:
    with urlopen(strYoutubeURL) as inp:
        resp = json.load(inp)

    for i in resp['items']:
        if i['id']['kind'] == "youtube#video":
            iDaysSinceLastDownload = datetime.datetime.strptime(i['snippet']['publishTime'], "%Y-%m-%dT%H:%M:%SZ") - dateLastDownloaded
            # If video was posted since last run time, download the video
            if iDaysSinceLastDownload.days >= 0:
                strFileName = (i['snippet']['title']).replace('/','-').replace(' ','_')
                print(f"{iProcessed}\tDownloading file {strFileName} from {strBaseVideoURL}{i['id']['videoId']}")
                # Need to retrieve a youtube object and filter for the *highest* resolution otherwise we get blurry videos
                if iProcessed >= iStart:
                    yt = YouTube(f"{strBaseVideoURL}{i['id']['videoId']}")
                    yt.streams.filter(progressive=True, file_extension='mp4').order_by('resolution').desc().first().download(filename=f"{strFileName}.mp4")
                    sleep(90)
                iProcessed = iProcessed + 1

    # Keep paging until the API stops returning a nextPageToken
    if 'nextPageToken' not in resp:
        break
    strYoutubeURL = f"{strStartURL}&pageToken={resp['nextPageToken']}"
    print(f"Now getting next page from {strYoutubeURL}")

# Update config.py with last run date
dateToday = datetime.date.today()
with open("config.py", "w") as f:
    f.write("import datetime\n")
    f.write(f"dateLastDownloaded = datetime.datetime({dateToday.year},{dateToday.month},{dateToday.day},0,0,0)\n")

Tableau: Upgrading from 2022.3.x to 2023.3.0

A.K.A. I upgraded and now my site has no content?!? Attempting to test the upgrade to 2023.3.0 in our development environment, the site was absolutely empty after the upgrade completed. No errors, nothing indicating something went wrong. Just nothing in the web page where I would expect to see data sources, workbooks, etc. The database still had a lot of ‘stuff’, the disk still had hundreds of gigs of ‘stuff’. But nothing showed up. I have experienced this problem starting with 2022.3.5 or 2022.3.11 and upgrading to 2023.3.0. I could upgrade to 2023.1.x and still have site content.

I wasn’t doing anything peculiar during the upgrade:

- Run TableauServerTabcmd-64bit-2023-3-0.exe to upgrade the CLI

- Run TableauServer-64bit-2023-3-0.exe to upgrade the Tableau binaries

- Once installation completes, open a new command prompt with Run as Administrator and launch “.\Tableau\Tableau Server\packages\scripts.20233.23.1017.0948\upgrade-tsm.cmd” --username username

The upgrade-tsm batch upgrades all of the components and database content. At this point, the server will be stopped. Start it. Verify everything looks OK – site is online, SSL is right, I can log in. Check out the site data … it’s not there!

Reportedly this is a known bug that only impacts systems that have been restored from backup. Since all of my servers were moved from Windows 2012 to Windows 2019 by backing up and restoring the Tableau sites … that’d be all of ’em! Fortunately, it is easy enough to make the data visible again. Run tsm maintenance reindex-search to recreate the search index. Refresh the user site, and there will be workbooks, data, jobs, and all sorts of things.

If reindexing does not sort the problem, tsm maintenance reset-searchserver should do it. The search reindex sorted me, though.

Mass Spec

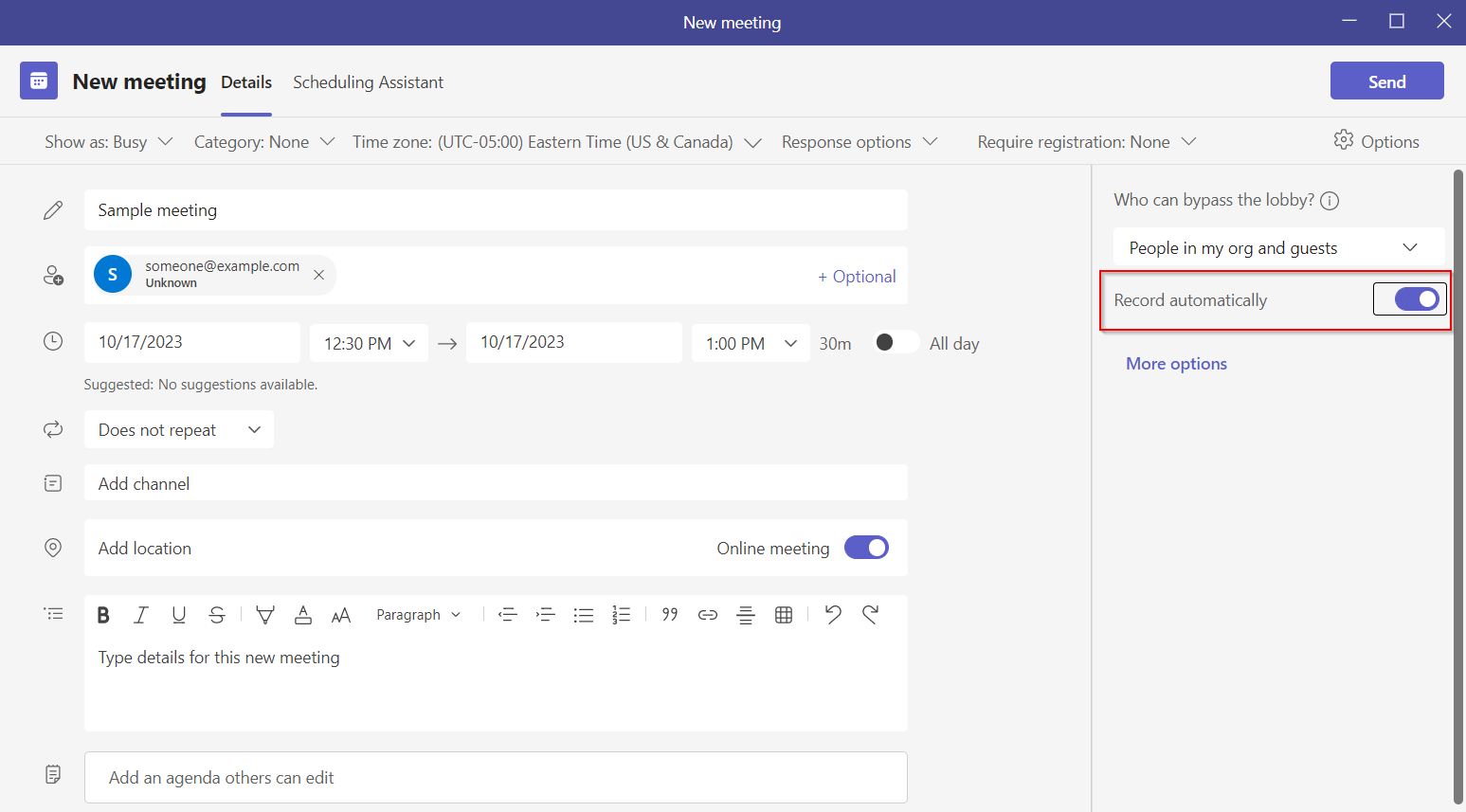

Did you know … Teams can automatically record meetings you schedule?

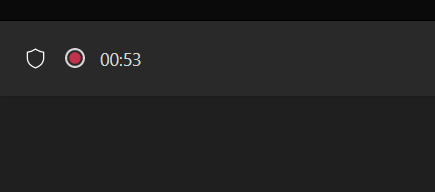

I don’t record all of my meetings — I probably don’t even record most of my meetings. But I schedule the occasional training session. And it really sucks when no one remembers to start recording … and we realize we missed the first fifteen minutes or so. Luckily, Teams has added an option to automatically record a meeting when it starts. No needing to remember to click record. No worrying that no one else thinks to kick off the recording if you are a bit late. When scheduling a meeting through Teams, there are a few settings on the right-hand side of the new meeting form. Simply toggle ‘Record automatically’ to on.

Voila — when I start the meeting, it immediately starts recording.

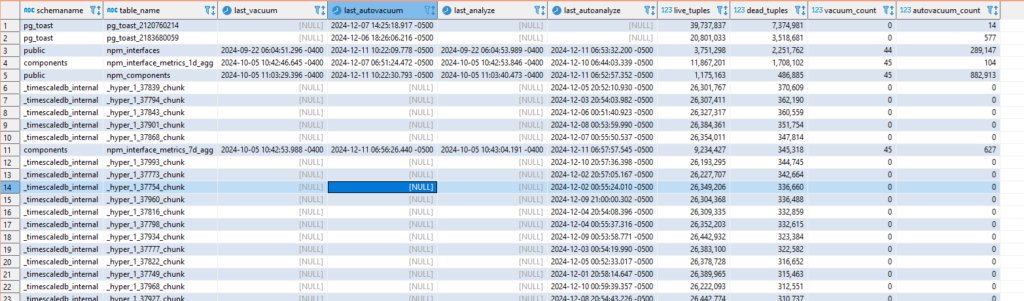

PostgreSQL – Vacuum and Analyze Stats for Tables

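This query retrieves information about the health of PostgreSQL tables: when each was last vacuumed and analyzed (manually or automatically), plus live and dead tuple counts, for every table outside the system schemas that has dead tuples: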

SELECT
    schemaname,
    relname AS table_name,
    last_vacuum,
    last_autovacuum,
    last_analyze,
    last_autoanalyze,
    n_live_tup AS live_tuples,
    n_dead_tup AS dead_tuples,
    vacuum_count,
    autovacuum_count
FROM
    pg_stat_all_tables
WHERE
    schemaname NOT IN ('pg_catalog', 'information_schema')
    AND n_dead_tup > 0
ORDER BY
    n_dead_tup DESC;

Voila:

Linux – High Load with CIFS Mounts using Kernel 6.5.5

We recently updated our Fedora servers from 36 and 37 to 38. Since the upgrade, we have observed servers with very high load averages (8+ on a 4-CPU server), but the servers didn’t seem unreasonably slow. On the Unix servers I first used, IRIX and Solaris, load average counts threads in a Runnable state. Linux, however, includes both Runnable and Uninterruptible states in the load average. This means processes waiting on I/O, such as a mkdir call against a mounted remote server or local disk I/O, are included in the load average. As such, a high load average on Linux may indicate CPU resource contention, but it may also indicate I/O contention elsewhere.

But there’s a third possibility: code that opts for the simplicity of an uninterruptible sleep without actually needing to be uninterruptible. Since our upgrade, CIFS mounts have a laundromat that I assume cleans up cache. I see four cifsd-cfid-laundromat threads in an uninterruptible sleep state, which means my load average, when the system is doing absolutely nothing, would be 4.

2023-10-03 11:11:12 [lisa@server01 ~/]# ps aux | grep " [RD]"
USER         PID %CPU %MEM    VSZ   RSS TTY      STAT START   TIME COMMAND
root        1150  0.0  0.0      0     0 ?        D    Sep28   0:01 [cifsd-cfid-laundromat]
root        1151  0.0  0.0      0     0 ?        D    Sep28   0:01 [cifsd-cfid-laundromat]
root        1152  0.0  0.0      0     0 ?        D    Sep28   0:01 [cifsd-cfid-laundromat]
root        1153  0.0  0.0      0     0 ?        D    Sep28   0:01 [cifsd-cfid-laundromat]
root      556598  0.0  0.0 224668  3072 pts/11   R+   11:11   0:00 ps aux
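To see at a glance how much of the load average comes from each state, the ps output can be tallied. The sketch below assumes it is handed the lines of ps aux output with the header row first; the function name is made up for illustration.

```python
from collections import Counter


def count_load_states(ps_lines):
    """Count processes in R (runnable) and D (uninterruptible sleep) states.

    ps_lines: lines of `ps aux` output, with the header as the first line.
    """
    header = ps_lines[0].split()
    stat_col = header.index("STAT")
    counts = Counter()
    for line in ps_lines[1:]:
        # Split on whitespace but keep the full COMMAND text in the last field
        fields = line.split(None, len(header) - 1)
        if len(fields) > stat_col and fields[stat_col][0] in "RD":
            counts[fields[stat_col][0]] += 1
    return counts
```

Fed the output above, this reports four D entries and one R entry (the ps command itself), which lines up with an idle load average of about 4.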

Looking around the Internet, I see quite a few bug reports regarding this situation … so it seems like an “ignore it and wait” problem. Although the load average value is increased by these sleeping threads, it’s cosmetic, which explains why the server didn’t seem to be running slowly even though the load average was so high.

https://lkml.org/lkml/2023/9/26/1144

Date: Tue, 26 Sep 2023 17:54:10 -0700
From: Paul Aurich
Subject: Re: Possible bug report: kernel 6.5.0/6.5.1 high load when CIFS share is mounted (cifsd-cfid-laundromat in "D" state)

On 2023-09-19 13:23:44 -0500, Steve French wrote:
>On Tue, Sep 19, 2023 at 1:07 PM Tom Talpey <tom@talpey.com> wrote:
>> These changes are good, but I'm skeptical they will reduce the load
>> when the laundromat thread is actually running. All these do is avoid
>> creating it when not necessary, right?
>
>It does create half as many laundromat threads (we don't need
>laundromat on connection to IPC$) even for the Windows server target
>example, but helps more for cases where server doesn't support
>directory leases.

Perhaps the laundromat thread should be using msleep_interruptible()?

Using an interruptible sleep appears to prevent the thread from contributing to the load average, and has the happy side-effect of removing the up-to-1s delay when tearing down the tcon (since a7c01fa93ae, kthread_stop() will return early triggered by kthread_stop).

~Paul