For many Java-based applications, Java KeyStore (JKS) has been the default for years. It’s familiar, widely used, and still supported. But “still supported” is not the same as “still the best choice.” If your application or platform supports it, now is a good time to move away from JKS and standardize on PKCS#12.

Why move away from JKS?

1. JKS is a proprietary format

JKS is a Java-specific format tied to older Java conventions. By contrast, PKCS#12 (standardized as RFC 7292) is supported across platforms, tools, and vendors.

That difference matters operationally. Certificate and key material increasingly needs to work across Java applications, web servers, load balancers, cloud services, and automation tooling. PKCS#12 is far better suited to that multi-platform reality.

2. JKS has legacy security characteristics

JKS should not be considered a modern format for protecting private keys.

It protects private keys with a proprietary, SHA-1-based scheme rather than standard password-based encryption, and it has historically relied on weaker constructions than modern PKCS#12 implementations. As with any password-protected container, security depends heavily on password strength, but JKS leaves less margin for error than modern formats do.

This becomes especially relevant if a keystore file is exposed. Offline password cracking is a realistic risk: widely available tools can target JKS files, and such attacks are most likely to succeed when organizations use weak or reused passwords.

This does not mean existing JKS files are inherently compromised. It does mean JKS should no longer be the default when stronger, more widely supported alternatives exist.
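Because offline cracking mostly succeeds against weak or reused passwords, one low-effort mitigation, whichever format you use, is to give each keystore its own long random password. The sketch below assumes `head -c`, `base64`, and `/dev/urandom` are available (true on most Linux and macOS systems); the function names and file path are hypothetical. The resulting file can be passed to keytool with its `-storepass:file` option instead of typing the password.

```shell
#!/bin/sh
# Sketch: generate a random keystore password and save it to a private file.
# Function names and the target path are hypothetical examples.

new_store_password() {
  # 24 random bytes encode to exactly 32 base64 characters (no padding).
  head -c 24 /dev/urandom | base64 | tr -d '\n'
}

write_password_file() {
  # Run in a subshell with umask 077 so the file is created with mode 600.
  ( umask 077 && new_store_password > "$1" )
}
```

For example, `write_password_file ./keystore.pass` creates a file readable only by the current user, which could then be referenced as `-storepass:file ./keystore.pass`.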

3. Java itself moved on

The Java platform has already made this transition. Starting with Java 9 (JEP 229), PKCS#12 became the default keystore type. JKS remains supported, but PKCS#12 is now the preferred standard in modern Java environments.
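To check which default a particular JDK uses, you can read its `keystore.type` security property, which is the value `KeyStore.getDefaultType()` returns. The sketch below assumes the standard `java.security` locations (`conf/security` on Java 9 and later, `jre/lib/security` or `lib/security` on Java 8) and takes the JDK directory as an argument; the function name is illustrative.

```shell
#!/bin/sh
# Sketch: print the default keystore type configured for a JDK installation.
# Assumes standard java.security locations; the function name is hypothetical.

default_keystore_type() {
  java_home=${1:-$JAVA_HOME}
  for f in "$java_home/conf/security/java.security" \
           "$java_home/jre/lib/security/java.security" \
           "$java_home/lib/security/java.security"; do
    if [ -f "$f" ]; then
      # Match only an uncommented "keystore.type=" line (not keystore.type.compat)
      # and print its value with surrounding whitespace removed.
      sed -n 's/^[[:space:]]*keystore\.type[[:space:]]*=[[:space:]]*//p' "$f" |
        tr -d '[:space:]'
      return 0
    fi
  done
  echo "java.security not found under $java_home" >&2
  return 1
}
```

On a Java 9 or later JDK, `default_keystore_type "$JAVA_HOME"` would be expected to print `pkcs12`; on Java 8 it typically prints `jks`.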

4. Many applications already support PKCS#12

In many environments, JKS persists simply because it’s what teams have always used—not because it’s required. Most modern Java frameworks, application servers, and tools support PKCS#12. For example, Tomcat has supported PKCS#12 since version 5.0, and current Java tooling handles it natively.

In practice, many applications can switch with little to no functional impact.

Why PKCS#12 is the better choice

PKCS#12 offers several clear advantages:

- Broad interoperability across platforms and vendors

- Better alignment with modern tooling and certificate automation

- Reduced reliance on Java-specific legacy formats

- Default support in current Java versions

What to do

If you manage Java applications or infrastructure, this is a good opportunity to review current keystore usage.

- Identify where JKS keystores are currently in use

- Verify whether those applications support PKCS#12

- Review vendor documentation for any requirements or constraints

- Update deployment standards to prefer PKCS#12 for new systems

- Gradually migrate existing JKS-based deployments where practical
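The first step, finding where JKS is in use, can be partly automated. File extensions are unreliable (`.jks`, `.keystore`, and `.ks` are all common), so a minimal sketch might inspect each candidate file's first bytes instead. It relies on well-known magic numbers: JKS files begin with `FE ED FE ED`, JCEKS files with `CE CE CE CE`, and PKCS#12 files, being DER-encoded, with an ASN.1 SEQUENCE tag (`0x30`). The extension list and function names are illustrative assumptions.

```shell
#!/bin/sh
# Sketch: classify keystore files by header bytes rather than by extension.
# Function names and the scanned extensions are hypothetical choices.

keystore_type() {
  # First four bytes as lowercase hex with no separators, e.g. "feedfeed".
  magic=$(od -An -tx1 -N4 "$1" | tr -d ' \n')
  case $magic in
    feedfeed) echo "JKS" ;;
    cececece) echo "JCEKS" ;;
    30*)      echo "DER (likely PKCS12)" ;;  # any DER file starts with 0x30
    *)        echo "UNKNOWN" ;;
  esac
}

find_jks() {
  # Walk a directory tree and print every candidate file whose header says JKS.
  find "${1:-.}" -type f \( -name '*.jks' -o -name '*.keystore' -o -name '*.ks' \
       -o -name '*.p12' -o -name '*.pfx' \) |
  while IFS= read -r f; do
    if [ "$(keystore_type "$f")" = "JKS" ]; then
      printf '%s\n' "$f"
    fi
  done
}
```

For example, `find_jks /opt/apps` lists every matching file under `/opt/apps` whose header identifies it as JKS, including files misleadingly named `.p12`.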

For many use cases, converting is straightforward. For example:

```shell
keytool -importkeystore \
  -srckeystore keystore.jks \
  -destkeystore keystore.p12 \
  -deststoretype PKCS12
```
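Building on the `keytool` conversion command, a slightly more defensive sketch also confirms that the new file holds the same number of entries as the original. It assumes `keytool` is on `PATH`, that the passwords are exported in the hypothetical environment variables `SRC_PASS` and `DEST_PASS` (keytool's `:env` password modifier reads a password from an environment variable, keeping it off the command line), and that keytool prints English messages, since the count is parsed from its `Your keystore contains N entries` line.

```shell
#!/bin/sh
# Sketch: convert one JKS keystore to PKCS#12, then compare entry counts.
# Assumes exported SRC_PASS / DEST_PASS (hypothetical names) and English output.

entry_count() {
  # Read `keytool -list` output on stdin and print N from
  # "Your keystore contains N entry" or "... N entries".
  sed -n 's/^Your keystore contains \([0-9][0-9]*\) entr.*$/\1/p'
}

convert_to_p12() {
  src=$1
  dest=${src%.jks}.p12   # strip a trailing .jks if present, then add .p12

  keytool -importkeystore \
    -srckeystore "$src" -srcstoretype JKS -srcstorepass:env SRC_PASS \
    -destkeystore "$dest" -deststoretype PKCS12 -deststorepass:env DEST_PASS \
    || return 1

  before=$(keytool -list -keystore "$src" -storetype JKS -storepass:env SRC_PASS | entry_count)
  after=$(keytool -list -keystore "$dest" -storetype PKCS12 -storepass:env DEST_PASS | entry_count)

  if [ -n "$before" ] && [ "$before" = "$after" ]; then
    echo "OK: $src -> $dest ($after entries)"
  else
    echo "MISMATCH: $src has ${before:-?} entries, $dest has ${after:-?}" >&2
    return 1
  fi
}
```

After exporting `SRC_PASS` and `DEST_PASS`, running `convert_to_p12 keystore.jks` would produce `keystore.p12`. If an entry's key password differs from its store password, keytool may prompt for it during the import. Keeping the original JKS file until the application has been tested against the PKCS#12 copy is a sensible precaution.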

Check vendor guidance

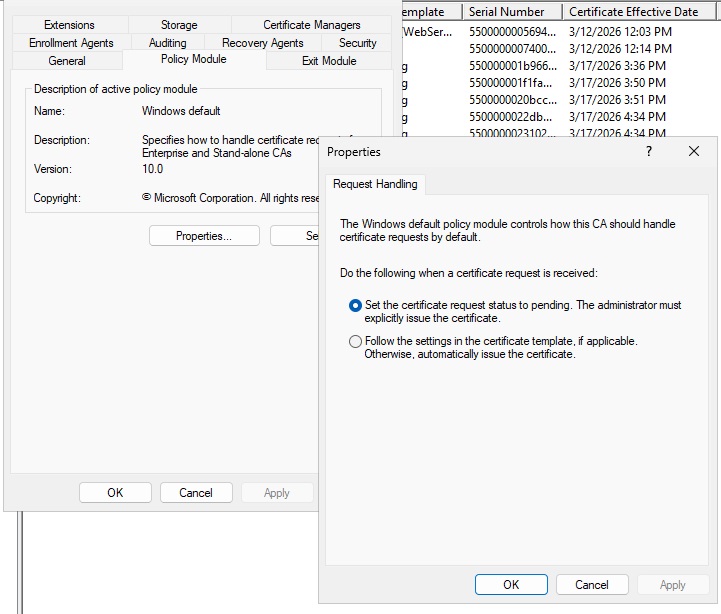

Before making changes, confirm support with the relevant application or platform vendor.

Key questions:

- Does the application support PKCS#12 for keys and certificates?

- Are there version-specific considerations?

- Are configuration changes required?

- Does the vendor recommend PKCS#12 for current deployments?

In most cases, the answer will be yes—but it’s still worth validating before making production changes.

Bottom line

JKS is not deprecated, but it is no longer the format organizations should be choosing by default.

It is a legacy, Java-specific keystore format with limited interoperability and older security characteristics. Meanwhile, PKCS#12 is standards-based, broadly supported, and the default in modern Java. If your application supports PKCS#12, prefer it. If you’re unsure, check—because in many cases, you can make the switch with minimal effort.

Choosing a modern keystore format is a small change that can meaningfully improve both security posture and operational flexibility.