I’ll preface this with a caveat: when the topic ID in Zookeeper and the one in Kafka’s partition.metadata files don’t match up, there’s something wrong beyond “hey, make this string the same as that string and try again”. Look for communication issues, disk issues, etc. that might lead to bad data. Lacking any identifiable cause, the general recommendation is to drop and recreate the topic.

But! “Hey, I am going to delete all the data in this Kafka topic. Everyone good with that?” is seldom answered with a resounding “of course, we don’t actually want to keep the data we specifically configured a system to keep”. So you need to keep the topic around even though something has clearly gone sideways.

Fortunately, you can manually update either the Zookeeper topic ID or the one on disk. I find it easier to update the one on disk in Kafka because the change can be reverted from a backup of the original file. Stop all of the Kafka servers, then run the following script on each one: it finds every partition folder for the topic in the Kafka data directory, backs up its partition.metadata file, and changes the wrong topic ID to the right one. Then start the Kafka servers again, and the topic’s partitions should be functional. For now.

    #!/bin/bash

    # Refuse to run while Kafka is up (checks a systemd unit named "kafka")
    check_kafka_stopped() {
        if systemctl is-active --quiet kafka; then
            echo "Error: Kafka service is still running. Please stop Kafka before proceeding."
            exit 1
        else
            echo "Kafka service is stopped. Proceeding with backup and update."
        fi
    }

    # Base directory where Kafka stores its data
    DATA_DIR="/path/to/data-kafka"

    # Topic prefix to search for (partition folders are named <topic>-<partition>)
    TOPIC_PREFIX="MY_TOPIC_NAME-"

    # Old (wrong, currently on disk) and new (right, from Zookeeper) topic IDs
    OLD_TOPIC_ID="2xSmlPMBRv2_ihuWgrvQNA"
    NEW_TOPIC_ID="57kBnenRTo21_VhEhWFOWg"

    # Date string for backup file naming
    DATE_STR=$(date +%Y%m%d)

    # Check if Kafka is stopped
    check_kafka_stopped

    # Find all directories matching the topic pattern and process each one
    for dir in "$DATA_DIR"/"${TOPIC_PREFIX}"*; do
        # Construct the path to the partition.metadata file
        metadata_file="$dir/partition.metadata"

        if [[ -f "$metadata_file" ]]; then
            # Back up the existing partition.metadata file; stop if the copy fails
            backup_file="$dir/partmetadata.$DATE_STR"
            echo "Backing up $metadata_file to $backup_file"
            cp "$metadata_file" "$backup_file" || { echo "Error: backup of $metadata_file failed."; exit 1; }

            # Use sed to replace the line containing the old topic_id with the new one
            echo "Updating topic_id in $metadata_file"
            sed -i "s/^topic_id: $OLD_TOPIC_ID/topic_id: $NEW_TOPIC_ID/" "$metadata_file"
        else
            echo "No partition.metadata file found in $dir"
        fi
    done

    echo "Backup and topic ID update complete."

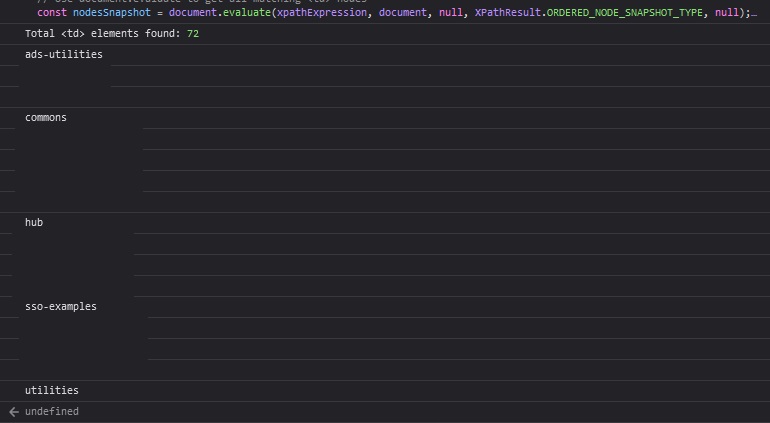
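Since the whole point of editing the on-disk copy is that it can be undone, here is a minimal sketch of the reverse operation. It assumes the backups were written as partmetadata.YYYYMMDD inside each partition folder, as the script above does; the function name `restore_partition_metadata` and its arguments are my own invention, not anything Kafka ships. As with the update, run it only while Kafka is stopped.

```shell
#!/bin/bash
# Hypothetical helper: copy each dated backup back over partition.metadata.
# Arguments: data directory, topic prefix, date suffix of the backup to restore.
restore_partition_metadata() {
    local data_dir="$1"
    local topic_prefix="$2"
    local date_str="$3"
    local dir
    local backup_file

    for dir in "$data_dir"/"$topic_prefix"*; do
        # Skip the literal pattern left behind when nothing matches
        [ -d "$dir" ] || continue

        backup_file="$dir/partmetadata.$date_str"
        if [ -f "$backup_file" ]; then
            echo "Restoring $dir/partition.metadata from $backup_file"
            cp "$backup_file" "$dir/partition.metadata"
        else
            echo "No backup found in $dir"
        fi
    done
}
```

Called as `restore_partition_metadata /path/to/data-kafka MY_TOPIC_NAME- 20240131`, where the date is whatever day the update script ran, this puts the original files back.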

How do you get the Kafka metadata and Zookeeper topic IDs? For Zookeeper’s copy, query the topic’s znode:

    kafkaserver::fixTopicID # bin/zookeeper-shell.sh $(hostname):2181
    get /brokers/topics/MY_TOPIC_NAME
    {"removing_replicas":{},"partitions":{"2":[251,250],"5":[249,250],"27":[248,247],"12":[248,251],"8":[247,248],"15":[251,250],"21":[247,250],"18":[249,248],"24":[250,248],"7":[251,247],"1":[250,249],"17":[248,247],"23":[249,247],"26":[247,251],"4":[248,247],"11":[247,250],"14":[250,248],"20":[251,249],"29":[250,249],"6":[250,251],"28":[249,248],"9":[248,249],"0":[249,248],"22":[248,251],"16":[247,251],"19":[250,249],"3":[247,251],"10":[251,249],"25":[251,250],"13":[249,247]},"topic_id":"57kBnenRTo21_VhEhWFOWg","adding_replicas":{},"version":3}
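Picking the topic_id out of that wall of JSON by eye is error-prone, so a small filter can do it instead. This is only a sketch: `extract_zk_topic_id` is a made-up name, and it assumes the JSON arrives on a single line on stdin, as in the output above.

```shell
#!/bin/bash
# Hypothetical helper: read the znode JSON on stdin and print only the
# value of its "topic_id" field. Lines without that field are ignored.
extract_zk_topic_id() {
    sed -n 's/.*"topic_id":"\([^"]*\)".*/\1/p'
}
```

Paste the JSON into it, or, assuming your zookeeper-shell.sh accepts a command after the connection string, pipe it straight through: `bin/zookeeper-shell.sh $(hostname):2181 get /brokers/topics/MY_TOPIC_NAME | extract_zk_topic_id`.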

Kafka’s copy is stored on disk, in the partition.metadata file inside each topic partition’s folder:

    kafkaserver::fixTopicID # cat /path/to/data-kafka/MY_TOPIC_NAME-29/partition.metadata
    version: 0
    topic_id: 2xSmlPMBRv2_ihuWgrvQNA
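Checking one partition folder by hand is fine, but a topic with thirty partitions spread over several brokers deserves a loop. The following read-only sketch reports every partition folder whose on-disk topic_id differs from the one you expect (normally the Zookeeper value). The function name `check_partition_topic_ids` and its arguments are hypothetical; it changes nothing on disk.

```shell
#!/bin/bash
# Hypothetical read-only check: print each partition folder whose on-disk
# topic_id differs from the expected one. Returns 1 if any mismatch is found.
check_partition_topic_ids() {
    local data_dir="$1"
    local topic_prefix="$2"
    local expected_id="$3"
    local dir
    local metadata_file
    local disk_id
    local status=0

    for dir in "$data_dir"/"$topic_prefix"*; do
        metadata_file="$dir/partition.metadata"
        # Folders without a metadata file are skipped, not reported
        [ -f "$metadata_file" ] || continue

        # Strip the "topic_id: " label and keep only the ID
        disk_id=$(sed -n 's/^topic_id: //p' "$metadata_file")
        if [ "$disk_id" != "$expected_id" ]; then
            echo "MISMATCH $dir: $disk_id (expected $expected_id)"
            status=1
        fi
    done
    return "$status"
}
```

Run `check_partition_topic_ids /path/to/data-kafka MY_TOPIC_NAME- 57kBnenRTo21_VhEhWFOWg` on each broker before the fix to see the extent of the problem, and again afterwards to confirm nothing was missed.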

Once the data has been processed, it would be a good idea to recreate the topic, but making sure the two topic ID values match will get you back online in the meantime.